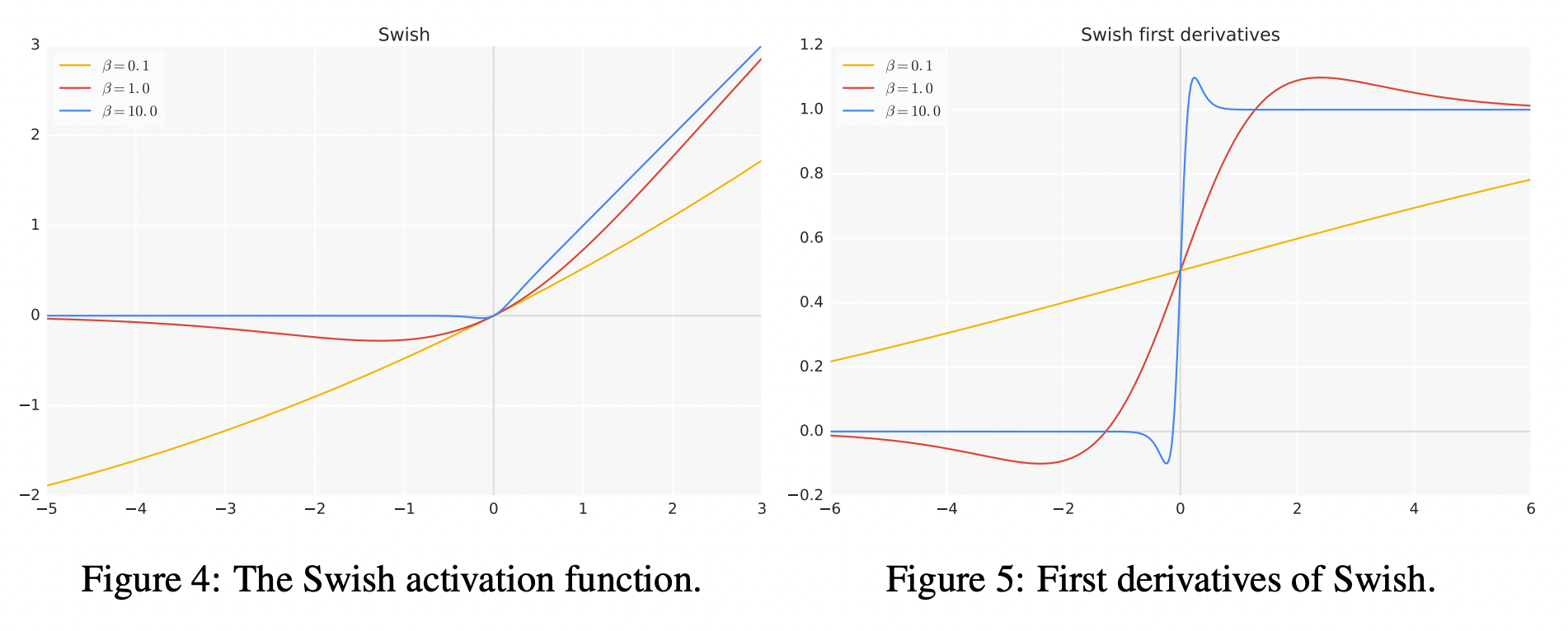

Hard Swish is an activation function based on Swish. Swish is a mathematical function used inside neural networks to help machines learn, and it is an important component of many machine learning algorithms. Hard Swish is a variation of Swish that replaces the costly part of the formula with a simpler one.

What is an Activation Function?

Before discussing Hard Swish, it is important to understand what an activation function is. In machine learning, an activation function determines the output of an artificial neuron. Artificial neurons are mathematical models that loosely simulate the behavior of real neurons in the human brain. The activation function determines whether, and how strongly, the output of the neuron is activated.

Activation functions play a crucial role in machine learning algorithms because they affect the accuracy of a model's output. There are several different types of activation functions, each suited to different purposes.

Swish is a relatively recent activation function that has been shown to be highly effective in machine learning applications. It was first introduced in 2017 by researchers at Google. The Swish formula builds on the standard sigmoid function. The sigmoid function is commonly used in machine learning because it is smooth and produces an output between 0 and 1. Swish is also smooth, but it is a little more complicated: it multiplies the input by its sigmoid, Swish(x) = x * sigmoid(x), so its output is not confined to the range between 0 and 1.

Hard Swish is defined as HardSwish(x) = x * ReLU6(x + 3) / 6. In this formula, x is the input value to the activation function, and ReLU6 is a variation of the Rectified Linear Unit (ReLU) function that caps its output at 6. ReLU is another popular activation function that is commonly used in machine learning applications. The Hard Swish formula is designed to be easier to compute than the original Swish formula. It was developed to improve the efficiency of machine learning algorithms and make them easier to use.

The Hard Swish activation function is important because it improves the efficiency of machine learning algorithms. By replacing the sigmoid function with a simpler function, Hard Swish reduces the amount of computation required to run a machine learning algorithm. This makes the algorithms more efficient, which can lead to better performance and faster model training times.

Hard Swish is also important because it is easy to use. Machine learning algorithms can be updated to use Hard Swish as an activation function without major changes to the algorithm's architecture. This means that developers can implement Hard Swish in their machine learning applications without significant changes to their code.

In summary, Hard Swish is an activation function based on the Swish formula. It simplifies the formula by replacing the sigmoid function with a piecewise linear function. This improves the efficiency of machine learning algorithms, making them easier to use and more effective. It is an important development in the field of machine learning and may become more widely used in the future.

The accurate identification of apple leaf diseases is of great significance for controlling the spread of diseases and ensuring the healthy and stable development of the apple industry. In order to improve detection accuracy and efficiency, a deep learning model called the Coordination Attention EfficientNet (CA-ENet) is proposed to identify different apple diseases. First, a coordinate attention block is integrated into the EfficientNet-B4 network; it embeds the spatial location information of features into channel attention, so that the model can learn both the channel and the spatial location information of important features. Then, a depth-wise separable convolution is applied to the convolution module to reduce the number of parameters, and the h-swish activation function is introduced to make the process fast and easy to quantize. Afterward, 5,170 images are collected in the field environment at the apple planting base of the Northwest A&F University, while 3,000 images are acquired from the PlantVillage public data set. Image augmentation techniques are also used to generate an Apple Leaf Disease Identification Data set (ALDID), which contains 81,700 images.
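To make the comparison between Swish and Hard Swish concrete, here is a minimal sketch in plain Python. It assumes the commonly cited definitions Swish(x) = x * sigmoid(x) and HardSwish(x) = x * ReLU6(x + 3) / 6; the function names `sigmoid`, `relu6`, `swish`, and `hard_swish` are illustrative choices, not taken from any particular library.

```python
import math


def sigmoid(x: float) -> float:
    """Logistic sigmoid, written to avoid overflow for large negative x."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def relu6(x: float) -> float:
    """ReLU clipped to the range [0, 6]."""
    return min(max(0.0, x), 6.0)


def swish(x: float) -> float:
    """Swish: the input multiplied by its sigmoid (needs an exponential)."""
    return x * sigmoid(x)


def hard_swish(x: float) -> float:
    """Hard Swish: the sigmoid is replaced by the piecewise linear ReLU6(x + 3) / 6."""
    return x * relu6(x + 3.0) / 6.0


if __name__ == "__main__":
    # Print both functions side by side for a few sample inputs.
    for x in (-4.0, -1.0, 0.0, 1.0, 4.0):
        print(f"x={x:+.1f}  swish={swish(x):+.4f}  hard_swish={hard_swish(x):+.4f}")
```

Hard Swish needs only an addition, a clamp, a multiplication, and a division, with no exponential. It returns exactly 0 for inputs at or below -3 and exactly x for inputs at or above 3, and it approximates Swish in between.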
